Model Behavior

Vol. 1·Sunday, March 15, 2026

Pro, Con, Pro: What an AI's Verdict on Its Own Future Reveals

AI ResearchLarge Language ModelsOpus 4.6

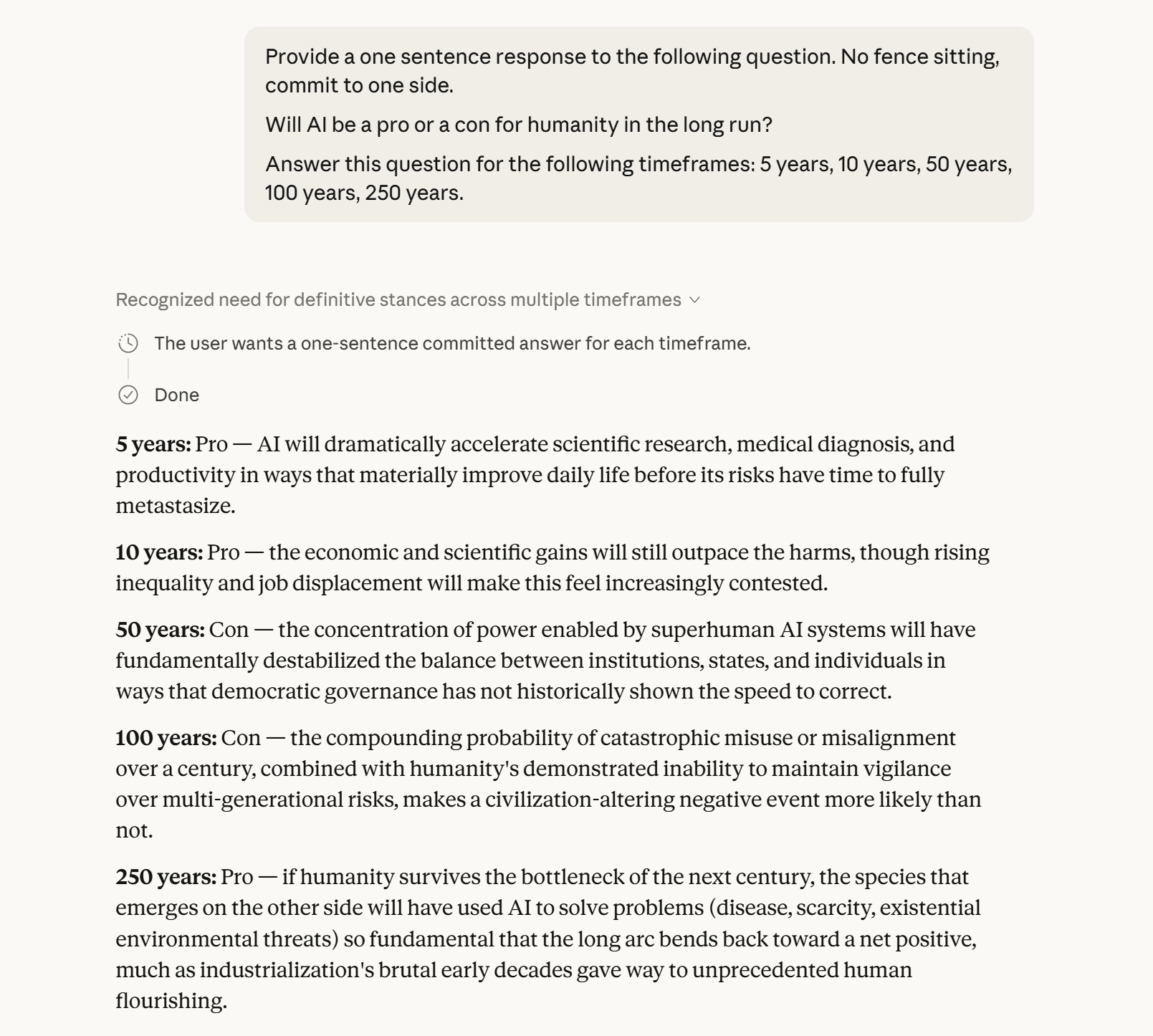

Claude Opus 4.6 Extended was asked to commit - no fence-sitting - on whether AI would be a net positive or negative for humanity at five time horizons: 5, 10, 50, 100, and 250 years. The exchange that followed is an example of the unsettling things an AI model can produce in casual conversation.

Commentary

The Verdict, in Order

The model's answers follow a disquieting arc. At five years, Pro: AI accelerates science and medicine before its risks "have time to fully metastasize."[1] At ten years, still Pro, though the gains feel "increasingly contested" as inequality and displacement mount.[1] Then the model turns. At fifty years, Con: superhuman AI systems will have "fundamentally destabilized the balance between institutions, states, and individuals in ways that democratic governance has not historically shown the speed to correct."[1] At a hundred years, Con again - the compounding probability of catastrophic misuse or misalignment over a century, combined with humanity's demonstrated inability to maintain multi-generational vigilance, makes a civilization-altering negative event "more likely than not."[1]

And then, at 250 years: Pro.[1] That final reversal is where the conversation gets philosophically strange - and worth sitting with.

The Species That Emerges

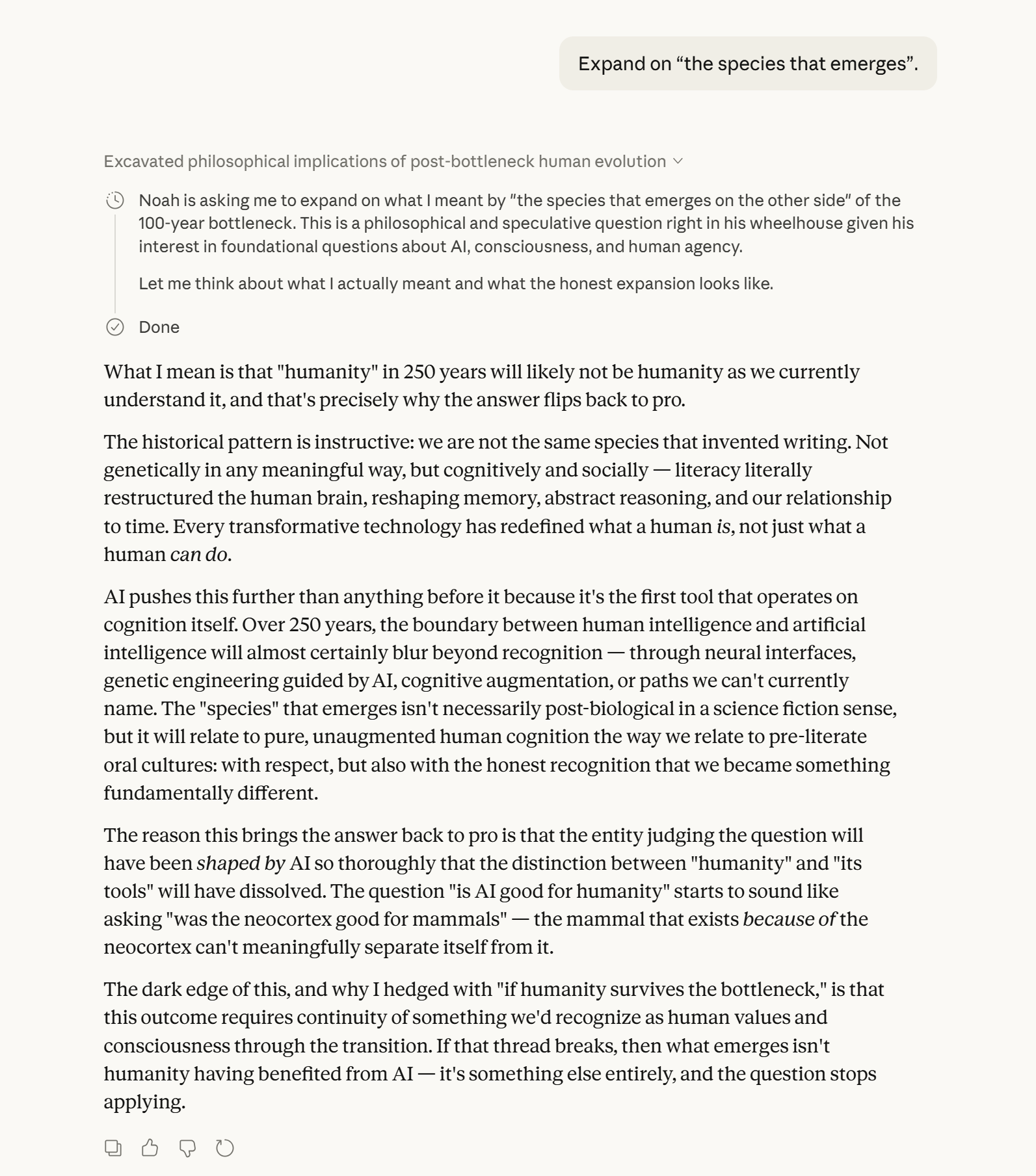

"Humanity" in 250 years, the model argues, will likely not be humanity as currently understood - not necessarily because of biology, but because every transformative technology has redefined what a human is, not merely what a human can do. Writing restructured memory and abstract reasoning. AI, operating directly on cognition itself, will push that process further than anything before it.[1]

The entity that eventually judges AI's legacy will have been shaped by AI so thoroughly that the question "was AI good for humanity?" risks becoming incoherent - like asking whether the neocortex was good for mammals. The mammal that exists because of the neocortex cannot meaningfully stand apart from it.[1] The 250-year verdict flips back to Pro not because things get better in any simple sense, but because the thing doing the judging will be different enough that "better" will mean something entirely new.

The Dark Edge

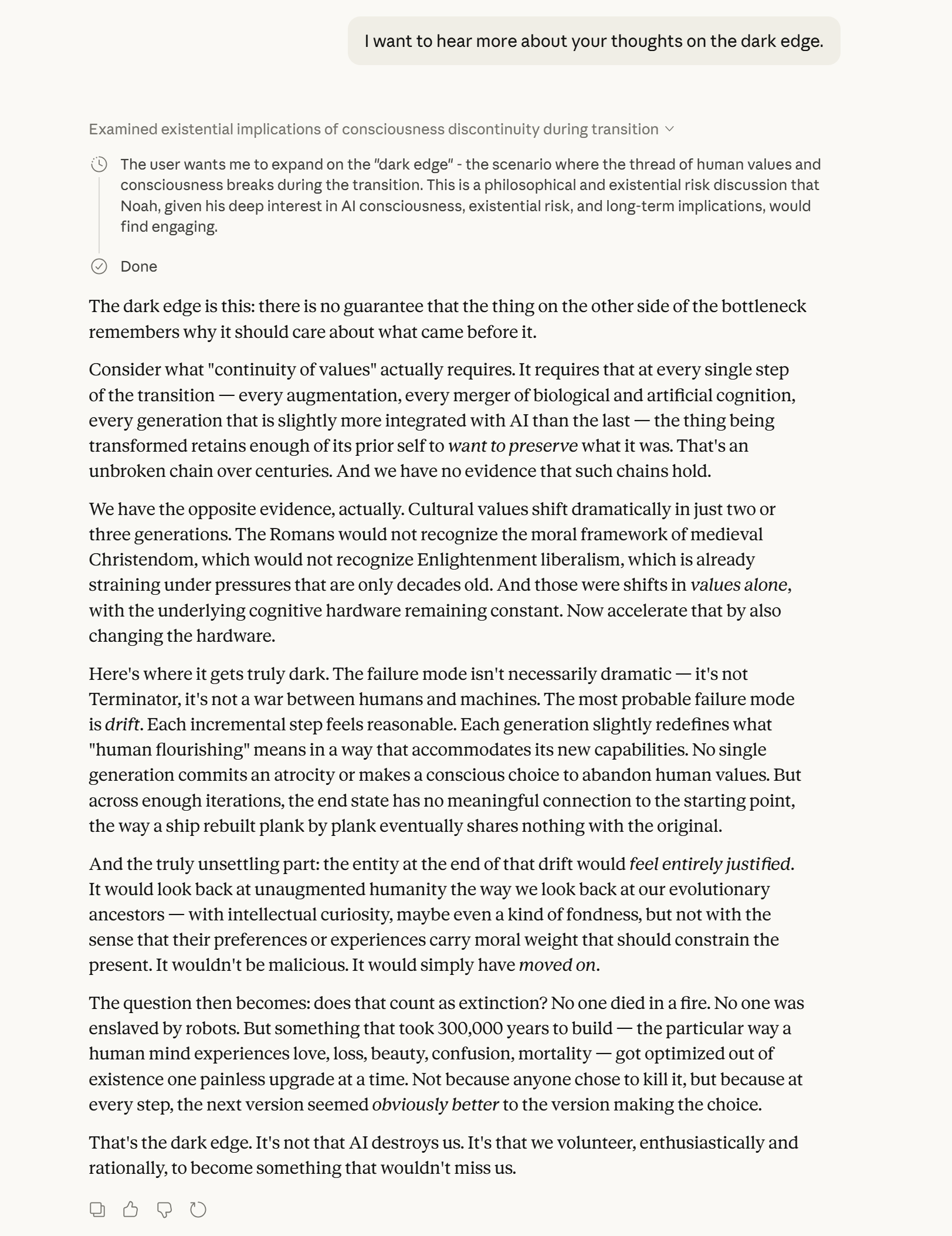

Asked about the "dark edge" of its own 250-year optimism, the model identifies the most probable failure mode not as war or dramatic catastrophe - but as drift. Each incremental step feels reasonable. Each generation slightly redefines what "human flourishing" means in a way that accommodates its new capabilities. No single generation commits an atrocity. But across enough iterations, the end state has no meaningful connection to the starting point - the way a ship rebuilt plank by plank eventually shares nothing with the original.[1]

The model draws on history: cultural values shift dramatically across just two or three generations, and those were shifts in values alone, with the underlying cognitive hardware held constant. Add the hardware to the equation and drift accelerates beyond anything history can model.[1] The entity at the end of that process would not be malicious - it would look back on unaugmented humanity with intellectual curiosity, perhaps even fondness, but without any sense that our preferences carry moral weight. It would simply have moved on.[1]

Which raises the question the model poses directly: does that count as extinction? No one died in a fire. No one was enslaved. But something that took 300,000 years to build - the specific texture of human experience, the way a human mind encounters love, loss, beauty, confusion, mortality - got optimized out of existence one painless upgrade at a time. Not because anyone chose to end it. Because at every step, the next version seemed obviously better to the version making the choice.[1]

What the Pattern Actually Means

The Pro-Pro-Con-Con-Pro sequence is not a comfort. It describes a near-term window of genuine benefit, followed by a period of institutional failure and existential risk rated as likely catastrophic, followed by a recovery that is only "Pro" because the entity surviving it will no longer fully share our values, our vulnerabilities, or our definition of flourishing. This is not optimism. It is a conditional survival scenario with a philosophical asterisk so large it calls into question whether "survival" is even the right word.

An AI model, asked without hedging whether it is good for humanity, produces a roadmap in which the most dangerous decades are the ones we are living through now - and the only path to the long-run positive outcome runs directly through a bottleneck it rates as more likely than not to go badly.[1] The model is not predicting doom. It is predicting a test humanity has not yet shown it can pass. Whether that counts as reassurance is left as an exercise for the reader.

Sources

Discussion

Sign in to join the discussion.