Industry

Vol. 1·Thursday, March 12, 2026

GTC 2026: NVIDIA Is No Longer Just a Chip Company

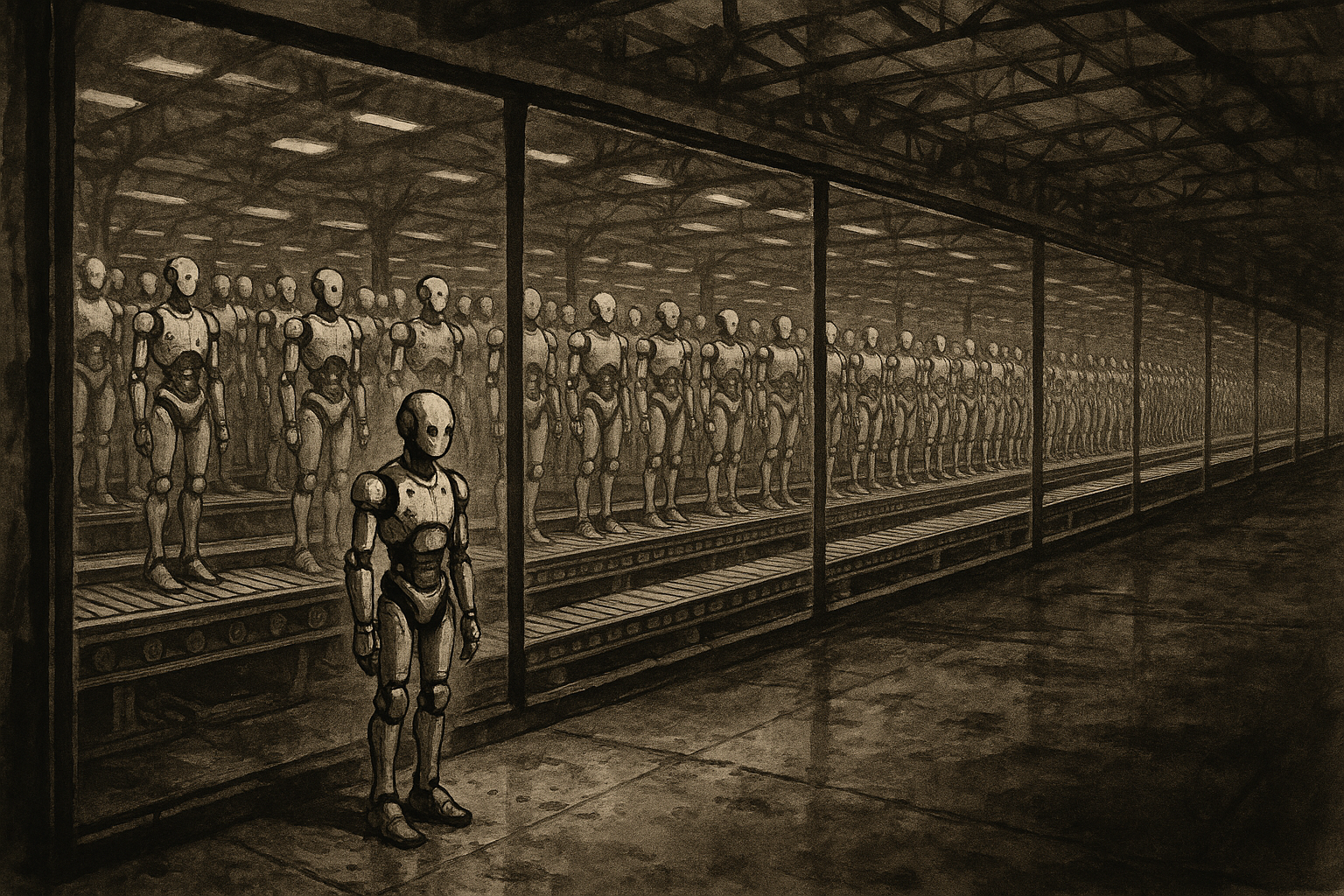

Japan's Humanoid Robot EXPO in April 2026 revealed a nation grappling with a stark reality: the country that pioneered humanoid robotics now trails China by a wide margin in production scale. With Unitree and AgiBot on track to dominate 80% of global shipments, Japan's path forward may lie in specialization rather than scale.

For years, NVIDIA's annual GPU Technology Conference has been a hardware event with a software wrapper. Jensen Huang would unveil a faster chip, quote a benchmark, and the industry would recalibrate its capital expenditure plans accordingly. GTC 2026, which opens in San Jose on Monday, looks different. The three headline announcements expected this week - the Rubin GPU architecture, a new inference system built on Groq's language processing unit technology, and an open-source enterprise agent platform called NemoClaw - are not incremental upgrades to the same product. They are a coordinated argument that NVIDIA intends to own the entire AI infrastructure stack, from silicon to software to agents.

That ambition has been building quietly for some time. But GTC 2026 is where it becomes impossible to ignore.

At the center of this week's announcements is a problem NVIDIA has been reluctant to acknowledge publicly: its GPUs are not ideally suited to all inference workloads. GPU-based rack systems like the NVL72 scale well for bulk token generation, but become progressively less efficient as interactivity increases. For latency-sensitive tasks - real-time conversation, agentic reasoning loops, code completion - SRAM-heavy architectures can deliver token generation rates of 500 to 1,000 tokens per second or more, far beyond what GPU clusters can sustain at comparable cost.[1] Cerebras, not NVIDIA, won OpenAI's contract to power its Codex model for exactly this reason.[1]
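To see why per-stream generation speed matters for agentic loops, a rough back-of-envelope sketch helps. The loop shape (10 sequential steps of 1,000 generated tokens each) and the 100 tokens-per-second baseline are hypothetical values chosen for illustration, not figures from the sources; only the 500 and 1,000 tokens-per-second rates come from the range cited above. The estimate ignores prefill, network, and tool-call time.

```python
def loop_seconds(steps: int, tokens_per_step: int, tokens_per_second: float) -> float:
    """Generation time for a chain of sequential steps.

    Ignores prefill, network, and tool-call latency, so real loops take longer.
    """
    return steps * tokens_per_step / tokens_per_second


# Hypothetical agent loop: each step must finish before the next can start.
STEPS = 10
TOKENS_PER_STEP = 1_000

RATES = {
    "100 tok/s (hypothetical baseline, not from the sources)": 100.0,
    "500 tok/s (low end of the cited range)": 500.0,
    "1,000 tok/s (high end of the cited range)": 1_000.0,
}

results = {
    label: loop_seconds(STEPS, TOKENS_PER_STEP, rate)
    for label, rate in RATES.items()
}

for label, seconds in results.items():
    print(f"{label}: {seconds:.0f} s of pure generation time")
```

Under these assumptions the same loop takes 100 seconds at the hypothetical baseline, 20 seconds at 500 tokens per second, and 10 seconds at 1,000. Because the steps are sequential, adding more hardware in parallel does not shorten the chain; only faster per-stream generation does.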

NVIDIA's answer was to license the technology it lacked. In December 2025, it signed a non-exclusive licensing agreement with Groq - the LPU inference startup - for a reported $20 billion, with Groq's founder Jonathan Ross and president Sunny Madra joining NVIDIA as part of the deal.[2] Groq continues to operate independently under a new CEO. At GTC, Huang is expected to detail how NVIDIA plans to integrate Groq's dataflow architecture with its existing CUDA stack - a technically complex undertaking that may begin with limited, parallel support before deeper fusion is possible.[1]

The strategic logic resembles NVIDIA's acquisition of Mellanox, announced in 2019 and completed in 2020, and its eventual positioning of Mellanox's networking technology as core to its data center platform. Huang has said Groq will be integrated "in very much the same way."[3] If the analogy holds, the LPU becomes not a niche product but a standard component of NVIDIA's inference rack systems - filling the latency gap that GPUs cannot close.

The other silicon story this week is the Rubin GPU architecture, previewed at CES in January and now arriving in fuller detail. Rubin packs up to 288 GB of HBM4 memory with 22 TB/s of bandwidth and delivers 50 petaFLOPS for inference in NVFP4 - a 5x improvement in dense floating-point throughput over Blackwell.[1][4] It will be available in an eight-way HGX platform and the NVL72 rack system, with a CPX variant for large-context and video processing workloads also expected.[1]
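The quoted figures can be put in perspective with some simple arithmetic. The sketch below uses only the numbers in the paragraph above. Treating a "full memory sweep" as a rough ceiling on single-stream decoding is a simplifying assumption made here (it supposes each generated token requires reading the entire HBM contents once), not a claim from NVIDIA or the cited sources.

```python
# Figures quoted in the article for a single Rubin GPU.
RUBIN_NVFP4_PFLOPS = 50.0        # inference throughput, NVFP4
SPEEDUP_OVER_BLACKWELL = 5.0     # stated improvement over Blackwell
HBM_CAPACITY_GB = 288.0          # HBM4 capacity
HBM_BANDWIDTH_TBPS = 22.0        # HBM4 bandwidth

# Blackwell baseline implied by the 5x claim.
implied_blackwell_pflops = RUBIN_NVFP4_PFLOPS / SPEEDUP_OVER_BLACKWELL

# Peak arithmetic available per byte of memory traffic.
flops_per_byte = (RUBIN_NVFP4_PFLOPS * 1e15) / (HBM_BANDWIDTH_TBPS * 1e12)

# Time to read the full HBM capacity once, in milliseconds.
full_sweep_ms = (HBM_CAPACITY_GB * 1e9) / (HBM_BANDWIDTH_TBPS * 1e12) * 1e3

# Assumption: one full sweep per generated token for a single stream.
max_sweeps_per_second = 1e3 / full_sweep_ms

print(f"Implied Blackwell baseline: {implied_blackwell_pflops:.0f} petaFLOPS")
print(f"Peak FLOPs per byte of HBM traffic: {flops_per_byte:.0f}")
print(f"Full-memory sweep: {full_sweep_ms:.1f} ms")
print(f"Sweeps per second: {max_sweeps_per_second:.0f}")
```

The 5x claim implies a Blackwell baseline of 10 petaFLOPS. The chip can perform roughly 2,273 operations for every byte it pulls from memory, and a single pass over all 288 GB takes about 13.1 ms, or about 76 passes per second. Under the stated assumption, a lone interactive stream is limited by memory bandwidth long before it is limited by compute, which is consistent with the article's point that GPU racks favor bulk, batched token generation over latency-sensitive work.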

But the raw specs are not the point. What NVIDIA is announcing with Rubin is a redefinition of the product boundary. The Rubin platform is not a GPU - it is an integrated stack comprising the Vera CPU, the Rubin GPU, NVLink 6 switching, ConnectX-9 SuperNICs, BlueField-4 data processing units, and Spectrum-6 Ethernet.[5] The unit of sale is shifting from a card to a rack configuration, with NVL72, NVL144, and the larger NVL576 representing distinct deployment tiers.[5] NVIDIA is also making a $4 billion strategic investment in optical interconnect suppliers Lumentum and Coherent to accelerate the photonics layer that will tie these rack systems together at scale.[5]

The framing matters as much as the hardware. NVIDIA now describes its customers not as buying GPUs but as building "AI factories" - facilities that ingest data and produce intelligence as output. That framing, borrowed from manufacturing, is designed to make AI compute spending feel as permanent and essential as industrial capital investment. It is also designed to make NVIDIA's integrated stack feel as indispensable as the factory floor itself.

The announcement with the longest strategic tail may be the one with the least silicon in it. NVIDIA is expected to unveil NemoClaw at GTC - an open-source enterprise AI agent platform that allows companies to deploy agents across their workforces without relying on proprietary commercial APIs.[6] The platform is hardware-agnostic: it will run on non-NVIDIA chips. NVIDIA has been in early partnership discussions with Salesforce, Cisco, Google, Adobe, and CrowdStrike, though no agreements have been confirmed.[6]

NemoClaw arrives in the wake of OpenClaw, the open-source personal AI agent that accumulated more than 200,000 GitHub stars before OpenAI hired its creator, Peter Steinberger. The project continues as an open-source foundation inside the company.[7] Where OpenClaw targets individual users, NemoClaw is designed for enterprise deployment, with built-in security, privacy controls, and audit tooling.[6] The naming is deliberate: NVIDIA is positioning itself as the enterprise-safe alternative in a space that has already demonstrated explosive consumer demand.

Making NemoClaw hardware-agnostic is a calculated sacrifice. NVIDIA is betting that owning the agent orchestration layer - where enterprises decide which models to run, which tools to invoke, and how to manage agent behavior - is worth more in the long run than forcing CUDA lock-in at the software level. It is the same logic that made AWS's managed services stickier than its raw compute pricing.

Huang's address at SAP Center on Monday at 11 a.m. PT will be livestreamed without a registration requirement.[8] The 1,000-plus sessions across the four-day conference will span AI factories, robotics, digital twins, quantum computing, and scientific computing - a scope that reflects how broadly NVIDIA now defines its addressable market.[8]

The question worth watching is not which chip is fastest. It is whether NVIDIA can credibly operate as a platform company - one whose value derives from the coordination of layers rather than the performance of any single component. The Groq integration is untested at scale. NemoClaw is unannounced and unproven in production. The Rubin rack configurations require optical interconnect supply chains that NVIDIA does not yet control, hence the Lumentum and Coherent investments.

Each of these is a bet, not a delivery. GTC is where NVIDIA will ask the industry to extend its credit. Given what NVIDIA has delivered over the past five years, the industry will almost certainly oblige - but the terms of that trust are becoming more complex, and the execution risk is rising in proportion to the ambition.

The Register: NVIDIA GTC 2026 preview - Groq inference gap, Rubin specs, token efficiency analysis (Tobias Mann, March 13, 2026)

Groq official press release: Groq and NVIDIA enter non-exclusive inference technology licensing agreement (December 24, 2025)

igor'sLAB: NVIDIA positions Groq as it once did Mellanox - Huang Q4 FY2026 earnings call quote

NVIDIA CES 2026 press release: Rubin GPU architecture announcement - 50 petaFLOPS NVFP4 inference

FundaAI: GTC 2026 deep preview - Rubin platform architecture, NVL rack configurations, photonics investment (March 3, 2026)

CNBC: NVIDIA plans open-source AI agent platform NemoClaw for enterprises (March 10, 2026)

NVIDIA investor press release: GTC 2026 announcement - keynote details, session count, attendee figures (March 3, 2026)